Key Takeaways

- Only 27% of architecture, engineering, and construction (AEC) firms currently use AI, yet 94% of those who do plan to expand usage—creating a widening gap between early movers and everyone else. (Source: Bluebeam AEC Technology Outlook 2026)

- Over 80% of organizations using generative AI report no meaningful bottom-line impact, often because they layer tools onto broken processes instead of redesigning workflows first. (Source: McKinsey, State of AI 2025)

- The firms seeing real ROI treat AI integration like an engineering project: feasibility first, then front-end engineering, then build. Skipping phases guarantees waste.

- Gartner predicts more than 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, and inadequate risk controls. In my experience, the usual root cause is skipped foundational work. (Source: Gartner, June 2025)

The West Texas Lesson That Changed How I Think About AI

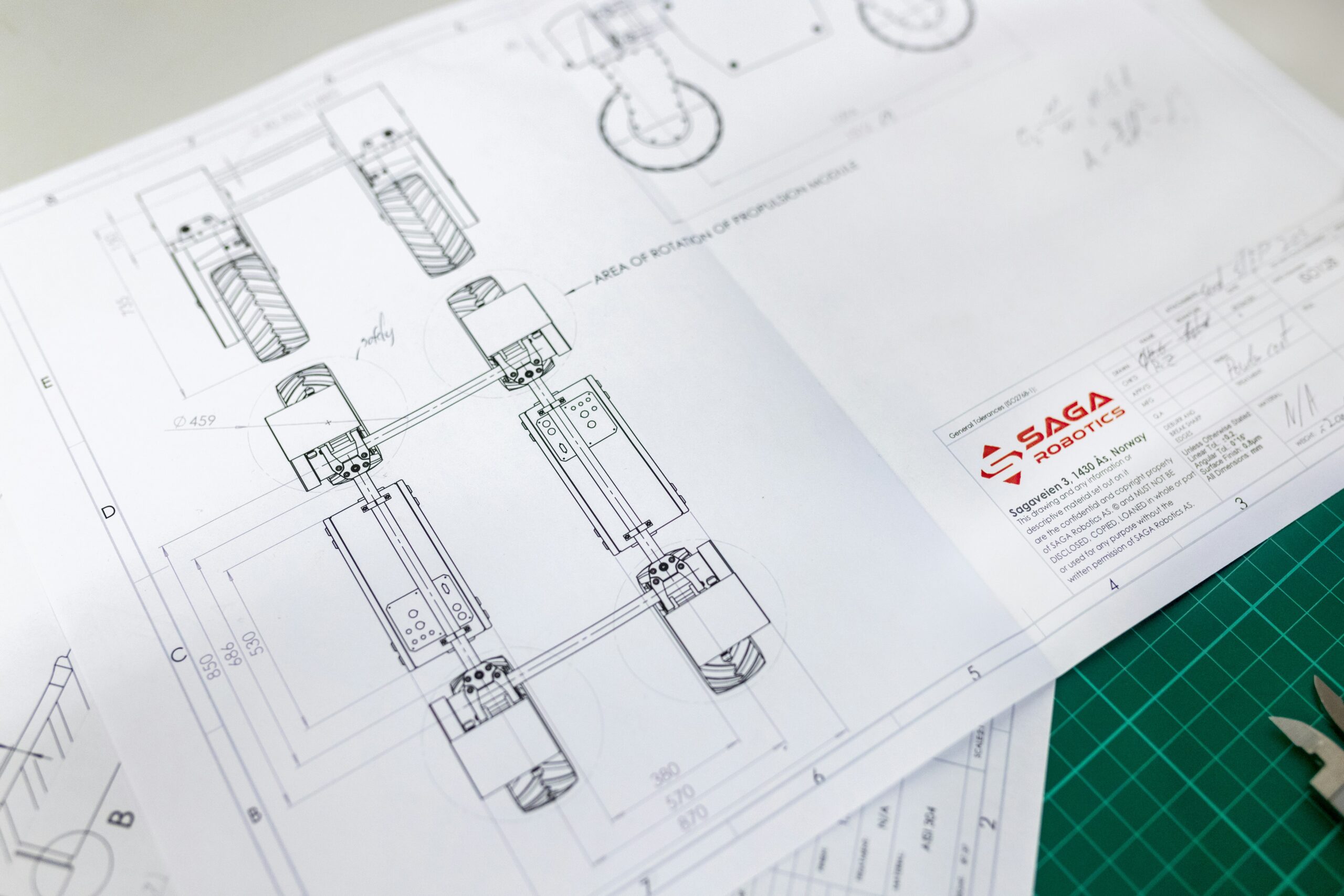

In 2023, I was part of a team tasked with as-building a series of facilities across West Texas. These were active production sites—energy and process facilities that needed upgrade projects to improve efficiency and increase throughput. Before we could touch a single piece of equipment, we had to understand exactly what we were working with.

We walked every line. We gathered every P&ID. We interviewed operators who had been running those systems for years. We documented the as-built condition of piping, instrumentation, and controls before drafting a single modification.

That experience keeps coming back to me now, because the process we used to prepare those facilities for upgrades is nearly identical to what works when integrating AI into an engineering-powered business. And the research confirms it: the firms that skip the foundational work are the ones burning money and canceling projects.

The Data Is Clear: Most AI Projects Fail Because They Skip the Foundation

McKinsey’s 2025 Global Survey on the State of AI, based on nearly 2,000 respondents across 105 countries, found that 88% of organizations now report using AI in at least one business function. That sounds like progress. But dig one layer deeper and the picture changes.

Only about one-third of those organizations have scaled AI beyond pilots. Just 39% report any enterprise-level financial impact from AI. And among organizations using generative AI specifically, more than 80% say they are not seeing meaningful bottom-line results. (Source: McKinsey, State of AI 2025)

The reason is consistent across the research: organizations are buying software and bolting it onto existing processes without redesigning those processes first. McKinsey found that AI high performers—the roughly 6% of companies seeing 5% or more of their EBIT attributable to AI—are nearly three times more likely than peers to have fundamentally redesigned their workflows. Workflow redesign was one of the single strongest factors distinguishing high performers from everyone else.

In engineering terms, they did the front-end engineering before they started the build. Everyone else skipped straight to procurement.

Gartner reinforced this in June 2025 with a pointed prediction: more than 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, and inadequate risk controls. Gartner’s Senior Director Analyst Anushree Verma was direct about the cause, noting that most agentic AI projects are early-stage experiments driven by hype and often misapplied. (Source: Gartner, June 2025)

And the AEC industry sits at an inflection point. Bluebeam’s 2026 AEC Technology Outlook, based on a global survey of over 1,000 AEC professionals, found that only 27% of firms currently use AI for automation, problem-solving, or decision-making. Among those who do, 68% have saved at least $50,000 and nearly half reclaimed 500 to 1,000 hours. Yet 65% of all firms surveyed spend less than 10% of their technology budgets on training. (Source: Bluebeam, October 2025)

The gap between the firms investing in foundational work and those just buying tools is widening fast.

The 3-Phase Approach: AI Integration as an Engineering Project

After implementing AI across 40+ engineering and technical business projects, I’ve found that successful integration follows the same phases as any well-run engineering upgrade project. The firms that treat it this way see measurable ROI. The firms that skip phases see canceled projects, wasted budgets, and frustrated teams.

Phase 1: Feasibility and Education (The “Crawl”)

When we showed up in West Texas, we did not start designing modifications on day one. We started by learning. We reviewed the original design documentation. We talked to the people operating the facilities every day. We built a shared understanding of what the systems actually did and how they performed under real conditions before we considered what to change.

AI integration works the same way. Before your team can implement AI into workflows, they need a practical, grounded understanding of what AI can and cannot do for engineering work. Not the hype. Not the vendor pitches. The actual capabilities and limitations applied to the work they do every day.

In our practice, this phase includes hands-on training with engineering-specific applications: documentation, calculations, communications, project controls, and proposal development. The goal is not to make everyone an AI expert. The goal is to build enough shared understanding across the team so that the next phases produce informed decisions instead of guesswork.

This is where many firms already start saving 3 to 8 hours per person per week on routine tasks, and that recovered time funds the deeper work that comes next.
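To put that range in team terms, here is a minimal back-of-the-envelope sketch. The team size and number of working weeks are hypothetical inputs chosen for illustration, not figures from our client work; only the 3 and 8 hours per person per week come from the range above.

```python
def recovered_hours(team_size: int, hours_per_person_per_week: float, working_weeks: int) -> float:
    """Total hours a team recovers per year at a given weekly saving per person."""
    return team_size * hours_per_person_per_week * working_weeks


# Hypothetical inputs: swap in your own headcount and calendar.
TEAM_SIZE = 10
WORKING_WEEKS = 48

low = recovered_hours(TEAM_SIZE, 3, WORKING_WEEKS)   # low end of the 3 to 8 hour range
high = recovered_hours(TEAM_SIZE, 8, WORKING_WEEKS)  # high end of the range

print(f"Recovered capacity: {low:.0f} to {high:.0f} hours per year")
```

For the assumed ten-person team, that works out to 1,440 to 3,840 hours per year, which is the budget of time the later phases can draw on.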

Phase 2: Front-End Engineering and Process Intelligence (The “Walk” and “Run”)

Back in West Texas, once we understood the as-built condition, we moved into front-end engineering. We mapped every process flow. We identified the constraints, the bottlenecks, and the equipment that was limiting throughput. Only after that mapping was complete did we start evaluating what to modify and how.

This is the phase most firms skip when it comes to AI, and it is the single biggest reason their projects fail.

In our methodology at Obnovit, this phase involves comprehensive workflow mapping within the business to identify the highest-value processes to target. We conduct interviews. We map the as-built workflow: the data, tools, resources, institutional knowledge, standard operating procedures, handoffs, reviews, and approval chains. We document who does what, when, and why.

Then we optimize the process using Lean principles and Theory of Constraints before we ever consider where AI fits. We eliminate waste. We identify the true constraint that is limiting capacity or revenue. We design the future-state workflow. And only then do we evaluate where AI can accelerate that optimized process and where human expertise must stay in the loop.
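The "find the true constraint" step can be sketched in a few lines once the workflow is mapped. This is a simplified illustration, not our full methodology: it assumes a strictly serial workflow where each step has a measured weekly capacity, and every step name, owner, and number below is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Step:
    name: str
    owner: str
    capacity_per_week: float  # units of work this step can complete per week


def find_constraint(steps: list[Step]) -> Step:
    """Return the lowest-capacity step, which caps throughput of a serial workflow."""
    if not steps:
        raise ValueError("workflow has no steps")
    return min(steps, key=lambda s: s.capacity_per_week)


# Hypothetical as-built proposal workflow, in order of execution.
workflow = [
    Step("Scope intake", "Project manager", 12),
    Step("Estimate preparation", "Lead engineer", 5),
    Step("Technical review", "Principal engineer", 3),
    Step("Final approval", "Operations director", 8),
]

constraint = find_constraint(workflow)
print(f"Constraint: {constraint.name} ({constraint.capacity_per_week:g} per week)")
```

In this made-up example the technical review limits the whole flow to three proposals per week, so speeding up any other step first would not raise throughput. Real workflows have rework loops and parallel branches, so treat the minimum-capacity rule as a starting point for the conversation rather than the answer.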

This is exactly what McKinsey’s research supports. The companies seeing real financial impact from AI are not just deploying tools. They are redesigning workflows around what people, AI, and automation each do best. McKinsey’s research found that this intentional workflow redesign has one of the strongest contributions to achieving meaningful business impact of all the factors they tested. (Source: McKinsey, State of AI 2025)

Phase 3: Build, Integrate, and Scale (The “Sprint”)

In West Texas, once the front-end engineering was complete and approved, we moved to construction. We knew what we were building, why we were building it, and what the expected performance outcomes would be. The build phase was efficient because the engineering was solid.

AI integration follows the same logic. Once you have the optimized workflow mapped and the AI integration points identified, the build phase starts with a clear buy-versus-build decision: will you bring in external experts and vendors to build the solutions, develop them in-house, or combine the two?

We work with our clients through that decision and can support the build and integration step when needed. The key is that this phase comes third, not first. You are building a specific solution against a documented process with defined inputs, outputs, and KPIs—not experimenting with tools hoping something sticks.

The Comparison: Why Order Matters

| Approach | What Happens | Typical Outcome |
|---|---|---|
| Tool-first (buy AI, figure it out later) | Team experiments with disconnected tools on undefined workflows | Likely to join the 40%+ of agentic AI projects that Gartner expects to be canceled by the end of 2027 (Gartner, 2025). No measurable ROI. |
| Education-first, then process, then build | Team gains shared understanding, workflows are mapped and optimized, AI is integrated at specific high-value points | Measurable time savings (3–8 hrs/week per person), clear ROI, scalable system |

The difference is not subtle. One approach treats AI as a software purchase. The other treats it as an engineering project with phases, deliverables, and accountability at each stage.

What You Can Do This Week

You do not need to overhaul your entire operation to start making progress. Here are three actions any engineering firm leader can take right now:

- Audit where your team’s time actually goes. Track one week of how your engineers split their time between high-value engineering work (design, analysis, client-facing problem solving) and lower-value tasks (documentation formatting, data entry, repetitive communications, administrative coordination). Most firms discover that 40 to 60% of engineering time goes to work that AI could accelerate.

- Pick your most painful bottleneck and map it. Choose the single workflow that creates the most frustration, delay, or cost in your operation. Map it end-to-end: every step, every handoff, every review cycle. Before you look at any AI tool, ask where you can eliminate steps, reduce handoffs, or remove redundant reviews. The best AI integration point will become obvious once the process is clear.

- Invest in foundational AI skills for your team before investing in AI tools. The Bluebeam survey found that 65% of AEC firms spend less than 10% of their technology budgets on training. That is a recipe for shelfware. When your team understands what AI can do within their specific workflows, they become the ones identifying the best applications, not outside vendors.
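For the first action, the one-week time audit, a spreadsheet is enough, but the arithmetic can also be sketched in a few lines. The category names come from the examples in that bullet; the logged hours are hypothetical, and which categories count as lower-value is an assumption you should adjust for your own firm.

```python
from collections import defaultdict

# Assumption: these categories count as lower-value work for the audit.
LOWER_VALUE = {
    "documentation formatting",
    "data entry",
    "repetitive communications",
    "administrative coordination",
}


def summarize(entries):
    """Take (category, hours) pairs; return hours per category and the lower-value share."""
    totals = defaultdict(float)
    for category, hours in entries:
        totals[category] += hours
    total_hours = sum(totals.values())
    if total_hours == 0:
        raise ValueError("no hours logged")
    lower_hours = sum(h for c, h in totals.items() if c in LOWER_VALUE)
    return dict(totals), lower_hours / total_hours


# Hypothetical one-week log for a single engineer.
week_log = [
    ("design", 12.0),
    ("analysis", 8.0),
    ("documentation formatting", 6.0),
    ("data entry", 4.0),
    ("repetitive communications", 5.0),
    ("administrative coordination", 5.0),
]

totals, share = summarize(week_log)
print(f"Lower-value share: {share:.0%}")
```

With these made-up entries, 20 of the 40 logged hours land in lower-value categories. Running the same tally across the whole team for one week gives you a baseline to compare against after any workflow change.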

The Window Is Open, But It Is Narrowing

The Bluebeam data tells the story clearly: 94% of AEC firms already using AI plan to expand their use of it. Those firms are pulling ahead in project delivery speed, proposal win rates, and capacity. For the 73% of AEC firms that have not started, every quarter of delay widens the competitive gap.

The firms that will thrive are the ones that approach AI integration the way they approach every other engineering project: with a sound methodology, clear phases, and the discipline to do the front-end work before the build.

We test everything in our own engineering practice before we bring it to clients. If you want to see how this 3-phase approach applies to your specific workflows, send me a message and I will share what we have learned.

Shane Chalupa is Co-Founder of Obnovit (OB-no-vit), where he helps engineering firms and technical businesses integrate AI through practical, engineering-first methodology. He is a licensed Professional Engineer with 15+ years of industrial engineering experience across manufacturing, energy, oil and gas, and chemical process industries.