Key Takeaways

- Most AI initiatives in engineering organizations fail because no one designs the transition from pilot to production. The technology works. The operating model around it does not.

- The average organization scraps 46% of AI proof-of-concepts before they reach production (Source: S&P Global Market Intelligence, 2025). This is an operating model problem, not a technology problem.

- Organizations that redesign workflows before deploying AI are nearly 3x more likely to see meaningful financial impact (Source: McKinsey, State of AI 2025). AI follows the workflow. The workflow does not follow the tool.

- A production-first methodology starts with identifying real constraints, not AI use cases. Map the workflow, simplify before augmenting, and apply AI where human judgment remains central.

- Integration and governance belong in phase one, not phase four. Pilots designed without production questions will not survive past the demo.

Last quarter, I sat in a conference room with the leadership team of an eight-figure distribution company. They had already run an AI pilot. The output looked promising. Their team was enthusiastic. But six months later, the pilot was sitting in a shared folder, untouched by daily operations. The question on the table was simple: why did this never turn into anything?

The answer was not about the AI. The pilot worked. The problem was that nobody had designed the bridge between “this looks useful” and “this is how we operate now.”

That distribution company is not unusual. According to S&P Global Market Intelligence’s 2025 survey of over 1,000 enterprises, the average organization scraps 46% of AI proof-of-concepts before they reach production (Source: S&P Global / CIO Dive, 2025). The share of companies abandoning most of their AI initiatives jumped to 42% in 2025, up from 17% the year before. Gartner’s research puts it even more bluntly: on average, only 48% of AI projects make it into production, and it takes eight months to get from prototype to deployment (Source: Gartner, May 2024).

This is not an AI problem. It is an operating model problem. Engineering organizations are good at evaluation and poor at institutionalization. AI exposes that gap faster than most technologies because it touches workflows, data, liability, and professional judgment simultaneously.

What follows is a production-first methodology for embedding AI into engineering workflows so it actually survives past the pilot phase.

The failure mode no one names

AI pilots fail when they are treated as experiments instead of as the first version of a production system.

After implementing AI across 40+ engineering projects, I have seen the same pattern repeat. The pilot gets run by one capable individual rather than the person who owns the workflow. Inputs are curated manually instead of pulled from the systems the team actually uses. Outputs get reviewed informally instead of through defined quality gates. Nobody defines how the work will be supported, updated, or audited after the initial excitement fades.

The result is a demo that proves possibility but avoids responsibility. It lives in a vacuum, disconnected from the real constraints of daily delivery.

The misconception that kills momentum

Leadership teams often believe that once a pilot demonstrates value, scaling is primarily a matter of access and training. In practice, the hardest work starts after the pilot succeeds.

Production use requires five things: integration into existing systems (your CAD environment, your project management tools, your document control); repeatable inputs and outputs; clear ownership for maintenance; governance for data and review; and defined boundaries for where AI assists and where human engineering judgment applies.

Skipping this work is how promising pilots quietly die. And in engineering, where professional liability attaches to every stamped deliverable, the stakes of getting this wrong extend beyond wasted investment.

Why workflows must come first

McKinsey’s 2025 State of AI survey, covering nearly 2,000 organizations across 105 countries, found that only 6% of organizations qualify as “AI high performers,” meaning they report both significant value from AI and at least 5% of EBIT attributable to AI use. The practice most strongly correlated with that performance? Workflow redesign. High performers are nearly three times more likely than other organizations to have fundamentally redesigned their workflows before deploying AI (Source: McKinsey, State of AI 2025).

This finding matches what I see in the field. AI becomes operational only when it is embedded into a workflow that already has a defined owner, measurable throughput or quality constraints, documented inputs and outputs, and an existing review or approval mechanism. AI does not replace these structures. It depends on them.

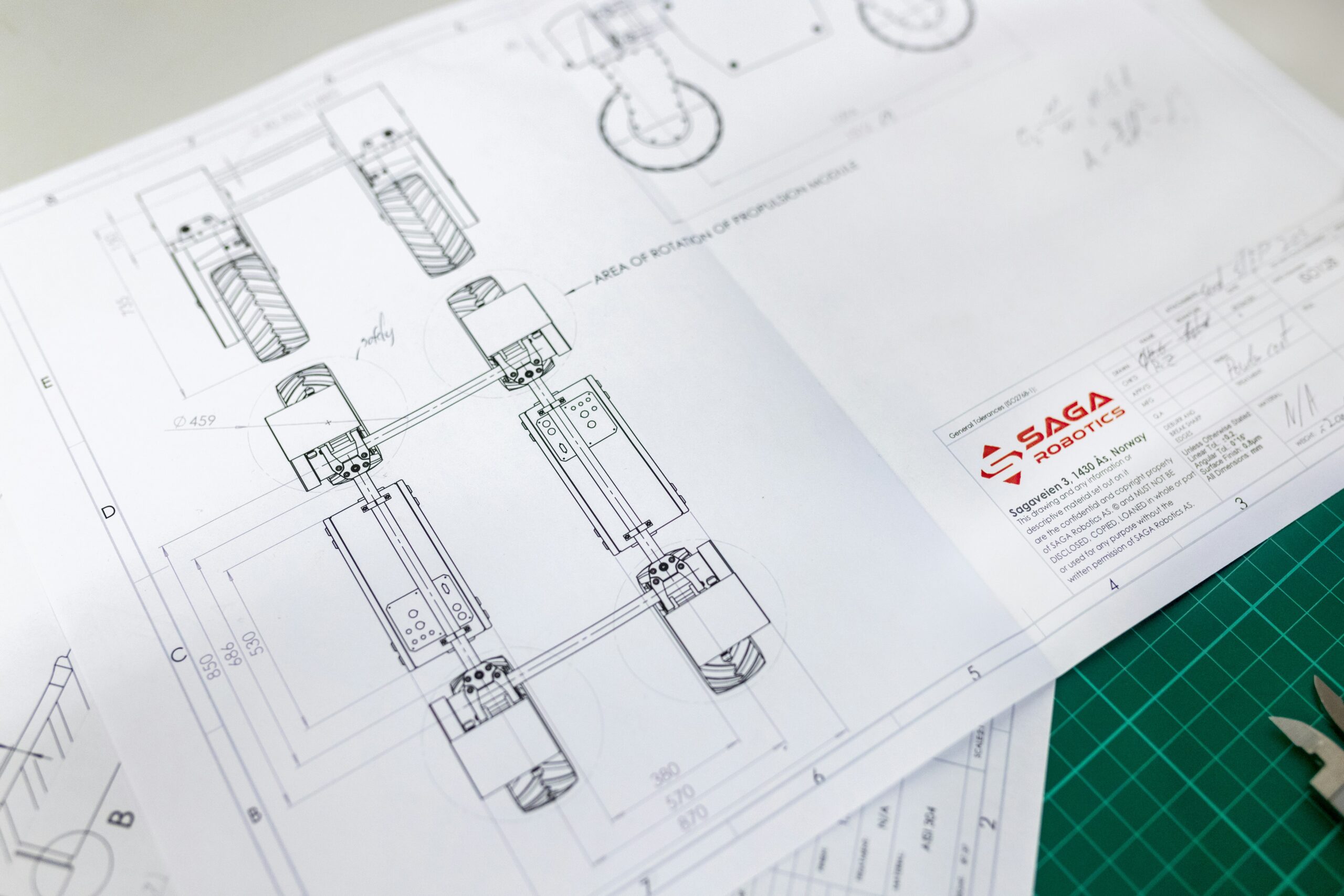

Think of it like upgrading a process control system. You would never install advanced instrumentation on a system that lacks P&IDs, defined setpoints, and calibration procedures. AI integration follows the same logic: the underlying process discipline has to exist first.

The four risks of stalled pilots

For engineering organizations, pilot-to-production failure creates risks that compound over time:

| Risk Type | What Happens | Why It Matters |
|---|---|---|
| Delivery risk | Teams experiment without improving throughput. Real constraints remain untouched. | Projects stay behind schedule while effort goes to pilots that produce demos, not capacity. |
| Governance risk | Unclear rules lead to inconsistent usage, shadow tools, and undocumented AI involvement. | Different team members use different tools differently, with no standard for quality or review. |
| Liability risk | AI-assisted outputs enter stamped deliverables without defined review or accountability. | Professional Engineering liability does not care whether a calculation error was human or AI-assisted. |
| Credibility risk | After one or two stalled pilots, leadership becomes skeptical of all future initiatives. | The organization develops “AI fatigue” and stops trying, even when the right opportunity appears. |

Avoiding these risks requires designing for production from week one, not treating production as a separate phase that comes after the pilot “works.”

The workflow-first operating model

The core principle is simple: AI follows the workflow. The workflow does not follow the tool.

Here is what I tell clients: do not start with “where can we use AI?” Start with “where is capacity actually constrained?”

Step 1: Identify the real constraint

In engineering organizations, constraints usually fall into one of three categories: documentation and coordination overhead that consumes senior engineering time, review and approval latency that creates bottlenecks in project delivery, or specialized knowledge concentrated in too few people, creating single points of failure.

If removing or accelerating a step would not change delivery speed, cost, or quality, it is not the constraint. Move on.
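That test can be sketched in a few lines of Python. This is a minimal illustration, assuming a strictly serial workflow in which each step has a measured weekly capacity. The step names, capacities, and helper functions are hypothetical placeholders, not client data.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    name: str
    units_per_week: float  # hypothetical measured capacity of this step


def find_constraint(steps: list[Step]) -> Step:
    """Return the lowest-capacity step; in a serial workflow it caps throughput."""
    if not steps:
        raise ValueError("workflow has no steps")
    return min(steps, key=lambda s: s.units_per_week)


def throughput(steps: list[Step]) -> float:
    """End-to-end throughput of a serial workflow equals its slowest step."""
    return find_constraint(steps).units_per_week


def changes_throughput(steps: list[Step], name: str, new_capacity: float) -> bool:
    """True if changing one step's capacity changes end-to-end throughput."""
    adjusted = [Step(s.name, new_capacity) if s.name == name else s for s in steps]
    return throughput(adjusted) != throughput(steps)


# Placeholder numbers for illustration only.
workflow = [
    Step("draft submittal", 12.0),
    Step("senior review", 5.0),
    Step("document control", 20.0),
]

print(find_constraint(workflow).name)                          # senior review
print(changes_throughput(workflow, "document control", 40.0))  # False
print(changes_throughput(workflow, "senior review", 10.0))     # True
```

Doubling document control capacity changes nothing in this example, so it is not the constraint. Real workflows have parallel paths and rework loops that this sketch ignores, but the question it asks is the same one to ask in the room.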

When we worked with an engineering-first industrial distributor, we started with this exact assessment. We clarified the company’s strategic posture (growth vs. sustain), then evaluated the three stacked business funnels: Marketing, Sales, and Delivery. Only after identifying where the true bottleneck lived did we discuss AI at all. The constraint was not where leadership initially expected it to be.

Step 2: Map the workflow at operating depth

High-level process diagrams are insufficient. You need to map what actually happens at the task level: who touches the work, what information is required at each step, where handoffs occur, where rework gets introduced, and which systems are involved.

This is where the engineering discipline most firms already have becomes their greatest asset. The same rigor you apply to process flow diagrams and P&IDs applies to mapping your operational workflows. The output exposes steps that should be eliminated before AI is even considered.

Step 3: Simplify before augmenting

Apply basic Lean thinking and Theory of Constraints analysis before introducing any technology. Remove steps that add no customer or regulatory value. Standardize inputs. Clarify acceptance criteria. Reduce unnecessary approval loops.

In our practice, we consistently find that 20-30% of workflow steps can be eliminated or simplified before AI enters the picture. AI should accelerate a clean workflow, not preserve a complicated one. Automating waste just produces waste faster.

Step 4: Apply AI where judgment remains human

Good AI candidates in engineering workflows share common traits:

| Characteristic | Good AI Candidate | Poor AI Candidate |
|---|---|---|
| Work type | Pattern-based, repetitive structuring | Novel engineering judgment calls |
| Quality standards | Clear, definable acceptance criteria | Subjective, context-dependent evaluation |
| Error consequence | Low consequence for first-draft errors | High consequence requiring PE-stamped accuracy |
| Review structure | Natural review points already exist | No formal review mechanism in place |
| Data availability | Consistent, accessible input data | Scattered, undocumented, or siloed information |

In engineering contexts, AI drafts, summarizes, checks, and structures. Engineers decide. Tools like Microsoft Copilot, Claude, and ChatGPT can accelerate documentation, streamline communication, assist with code and calculation verification, and structure technical reports. The professional judgment, the engineering stamp, and the client relationship stay with the engineer.
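One way to make the candidate traits above usable in a workshop is to turn them into an explicit screen. The Python sketch below is an assumption-laden illustration: the field names, the example tasks, and the rule that every trait must hold are my own simplifications, not a formal scoring model.

```python
from __future__ import annotations

from dataclasses import dataclass

# One flag per row of the candidate table; the names are hypothetical.
TRAITS = (
    "pattern_based",
    "clear_acceptance_criteria",
    "low_first_draft_error_cost",
    "review_point_exists",
    "consistent_input_data",
)


@dataclass(frozen=True)
class CandidateTask:
    name: str
    pattern_based: bool
    clear_acceptance_criteria: bool
    low_first_draft_error_cost: bool
    review_point_exists: bool
    consistent_input_data: bool


def failed_traits(task: CandidateTask) -> list[str]:
    """List the traits a task is missing, in table order."""
    return [trait for trait in TRAITS if not getattr(task, trait)]


def is_good_candidate(task: CandidateTask) -> bool:
    """Assumed rule for this sketch: a good candidate meets every trait."""
    return not failed_traits(task)


# Illustrative tasks only.
meeting_minutes = CandidateTask("structure meeting minutes", True, True, True, True, True)
novel_design = CandidateTask("novel design judgment", False, False, False, True, True)

print(is_good_candidate(meeting_minutes))  # True
print(failed_traits(novel_design))
```

The useful output is usually the list of missing traits rather than the yes or no, because it tells you what to fix in the workflow before AI is worth discussing.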

Designing the pilot to survive production

A pilot should answer production questions from day one, not defer them.

When we led a half-day workshop with an eight-figure distribution company, the goal was not to “explore AI possibilities.” It was to translate strategy into a scoped, budgetable pilot with defined inputs, process steps, outputs, and a schedule. We mapped the bottlenecked workflow, applied Lean waste elimination and constraint analysis, evaluated whether each step should be eliminated, simply automated, or enhanced with AI, and then drafted a tight pilot scope with benchmark KPIs defined before implementation began.

From the start of any pilot, define these production elements:

- What system does the AI output feed into?

- Who reviews the output, and against what standard?

- What happens when the output is wrong?

- How is usage logged and auditable?

- How will the workflow be taught to the next person who needs to run it?

If these questions feel uncomfortable during the pilot, they will be fatal later. A pilot that cannot answer them is a demo, not a first version of a production system.

Measurement that survives budget review

If success cannot be explained in operational terms the engineering leadership team already uses, it will not survive the next budget conversation.

Before any pilot starts, establish baseline measurements: cycle time or effort per unit of work, error or rework rate, volume and frequency of the task, and review effort required. After implementation, measure the same variables. Avoid vague productivity claims. Tie results to delivery metrics your leadership already trusts.
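A before-and-after comparison of those four variables can be sketched as follows. This is a minimal Python example under stated assumptions: the `Snapshot` fields, the helper names, and every number are placeholders I made up for illustration, and monthly effort is simplified to volume times hands-on plus review hours.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    hours_per_unit: float         # cycle effort per unit of work
    rework_rate: float            # fraction of units that need rework
    units_per_month: int          # task volume
    review_hours_per_unit: float  # review effort per unit


def percent_change(before: float, after: float) -> float:
    """Relative change from the baseline, in percent."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before * 100.0


def monthly_effort_hours(s: Snapshot) -> float:
    """Simplified monthly effort: volume times (hands-on plus review hours)."""
    return s.units_per_month * (s.hours_per_unit + s.review_hours_per_unit)


def compare(baseline: Snapshot, after: Snapshot) -> dict[str, float]:
    """Compare the same variables before and after implementation."""
    return {
        "hours_per_unit_pct": percent_change(baseline.hours_per_unit, after.hours_per_unit),
        "rework_rate_pct": percent_change(baseline.rework_rate, after.rework_rate),
        "review_hours_pct": percent_change(
            baseline.review_hours_per_unit, after.review_hours_per_unit
        ),
        "monthly_hours_saved": monthly_effort_hours(baseline) - monthly_effort_hours(after),
    }


# Placeholder numbers for illustration only.
baseline = Snapshot(hours_per_unit=4.0, rework_rate=0.20, units_per_month=50, review_hours_per_unit=1.0)
pilot = Snapshot(hours_per_unit=3.0, rework_rate=0.15, units_per_month=50, review_hours_per_unit=1.5)

for metric, value in compare(baseline, pilot).items():
    print(f"{metric}: {value:+.1f}")
```

In this made-up case, drafting effort falls while review effort rises, which is exactly why all four variables need a baseline. Reporting only the drafting number would overstate the gain.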

In my practice, we define KPIs and projected ROI before any build begins, then monitor results through a dashboard. One client, a fleet services company, identified $3M in annual savings through this exact process of mapping constraints, measuring baselines, and implementing AI where the data supported it. That number came from measurement, not projection.

Integration and governance are not phase four

Integration and governance are part of phase one. This is one of the most common mistakes I see, and it is one of the most expensive.

From the outset, define:

- Approved AI environments: Microsoft Copilot, Claude, ChatGPT, or enterprise LLMs aligned to your tenant, with clear boundaries on which tools are sanctioned for which tasks

- Data classification rules: What data can interact with AI tools, and under what conditions

- Review expectations: Documented standards for human review of AI-assisted outputs, particularly for PE-stamped deliverables

- Disclosure requirements: Clarity on where AI assistance must be noted internally for audit and quality assurance purposes

Governance that gets added after adoption is already underway rarely achieves consistent compliance. Build the guardrails before the team starts driving.
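The first two rules, approved environments and data classification, can be written down as a small policy table rather than left as tribal knowledge. The Python sketch below is only an illustration: the tool identifiers, the three classification levels, and the deny-by-default rule are assumptions for this example, not a recommended policy for any specific firm.

```python
from __future__ import annotations

from enum import IntEnum


class DataClass(IntEnum):
    """Hypothetical classification levels, ordered from least to most sensitive."""
    PUBLIC = 1
    INTERNAL = 2
    CLIENT_CONFIDENTIAL = 3


# Hypothetical policy: each sanctioned tool maps to the most sensitive
# data class it may touch. Tool names are placeholders.
APPROVED_TOOLS: dict[str, DataClass] = {
    "tenant-assistant": DataClass.CLIENT_CONFIDENTIAL,
    "public-chatbot": DataClass.PUBLIC,
}


def is_use_allowed(tool: str, data_class: DataClass) -> bool:
    """Deny unsanctioned tools; allow sanctioned ones up to their ceiling."""
    ceiling = APPROVED_TOOLS.get(tool)
    if ceiling is None:
        return False  # shadow tools are denied by default in this sketch
    return data_class <= ceiling


print(is_use_allowed("tenant-assistant", DataClass.CLIENT_CONFIDENTIAL))  # True
print(is_use_allowed("public-chatbot", DataClass.INTERNAL))               # False
print(is_use_allowed("unknown-plugin", DataClass.PUBLIC))                 # False
```

A table like this is short enough to review in one meeting, and it gives the team a clear answer before they reach for whatever tool is closest.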

Scaling only after stability

Scaling should feel boring. If it feels exciting, the workflow is probably not stable yet.

Do not scale when the workflow still requires constant support from the person who built it, when outputs vary significantly by user, when documentation is incomplete, or when review standards remain unclear.

Scale when results are consistent across users, ownership is defined and accepted, training materials exist and have been tested, and support requests have dropped to a manageable cadence.

The methodology is repeatable: identify the next constraint, map the workflow, simplify, apply AI where appropriate, measure, stabilize, scale. Then do it again. The eight-figure distribution company we worked with left the engagement with more than a single pilot. It left with a repeatable cadence for identifying the next bottleneck and running the same play across adjacent workflows.

How Obnovit approaches this

We work with engineering-driven organizations to turn AI from isolated pilots into operational capability using this exact methodology. That means starting with real workflow constraints, not AI tool demos. It means embedding Microsoft Copilot, Claude, ChatGPT, or enterprise LLMs into governed environments. It means designing human-in-the-loop review that protects professional judgment and PE liability. It means measuring outcomes in delivery terms, not enthusiasm. And it means progressing through our Crawl-Walk-Run-Sprint framework without skipping steps, because each phase builds the operational discipline the next phase depends on.

We test every framework and tool in our own engineering practice before recommending it to clients. When something works in our project delivery, it becomes part of the methodology. When something does not work, we fix it before it reaches anyone else. The focus is not AI adoption for its own sake. The focus is operational performance with engineering discipline.

The business outcome

When AI reaches production in engineering environments, the impact shows up in terms leadership already measures: reduced administrative drag on senior engineers who can redirect hours to billable design work, faster turnaround in documentation-heavy workflows, more consistent quality through standardized drafts and structured review, and clearer ownership and auditability of work products.

These outcomes compound over time because the organization builds capability, not just tools. The team learns to identify constraints, optimize workflows, and apply AI systematically. That capability persists and grows, even as the specific tools evolve.

The decision point

Pilot-to-production failure is not inevitable. It is a design choice.

You can treat AI as a series of disconnected experiments that fade out, or as an engineered capability that is owned, governed, and measured like any other part of your delivery system.

The firms seeing real results from AI are the ones that redesigned their workflows first. The data supports it. The methodology exists. The question is whether you build the bridge or keep running pilots that go nowhere.

Your next step: Download the free AI Capacity Calculator to identify where AI can save your team hours and pinpoint the workflow constraints worth solving first. If you are ready to talk specifics, start the conversation and we will identify two high-ROI use cases for your firm in 30 minutes.