In this article:

- The real risk is not AI itself. It is unmanaged AI usage happening outside your governance framework. A Microsoft/Censuswide study found that 71% of UK employees have used unapproved consumer AI tools at work, and 51% do so weekly.

- Blocking AI tools without providing a sanctioned path does not eliminate usage. It pushes adoption underground where IT has zero visibility and zero control.

- Microsoft 365 Copilot operates within your tenant boundary with logical isolation, encryption, and role-based access controls, making it a strong fit for most Internal and Confidential data classes when properly configured.

- Four risk surfaces require active management: data risk, governance risk, cost/sprawl risk, and professional liability risk. Address all four before scaling.

- Start with one bottleneck workflow. Define data classification, access controls, human review protocols, and audit trail requirements, then pilot, prove KPIs, and scale.

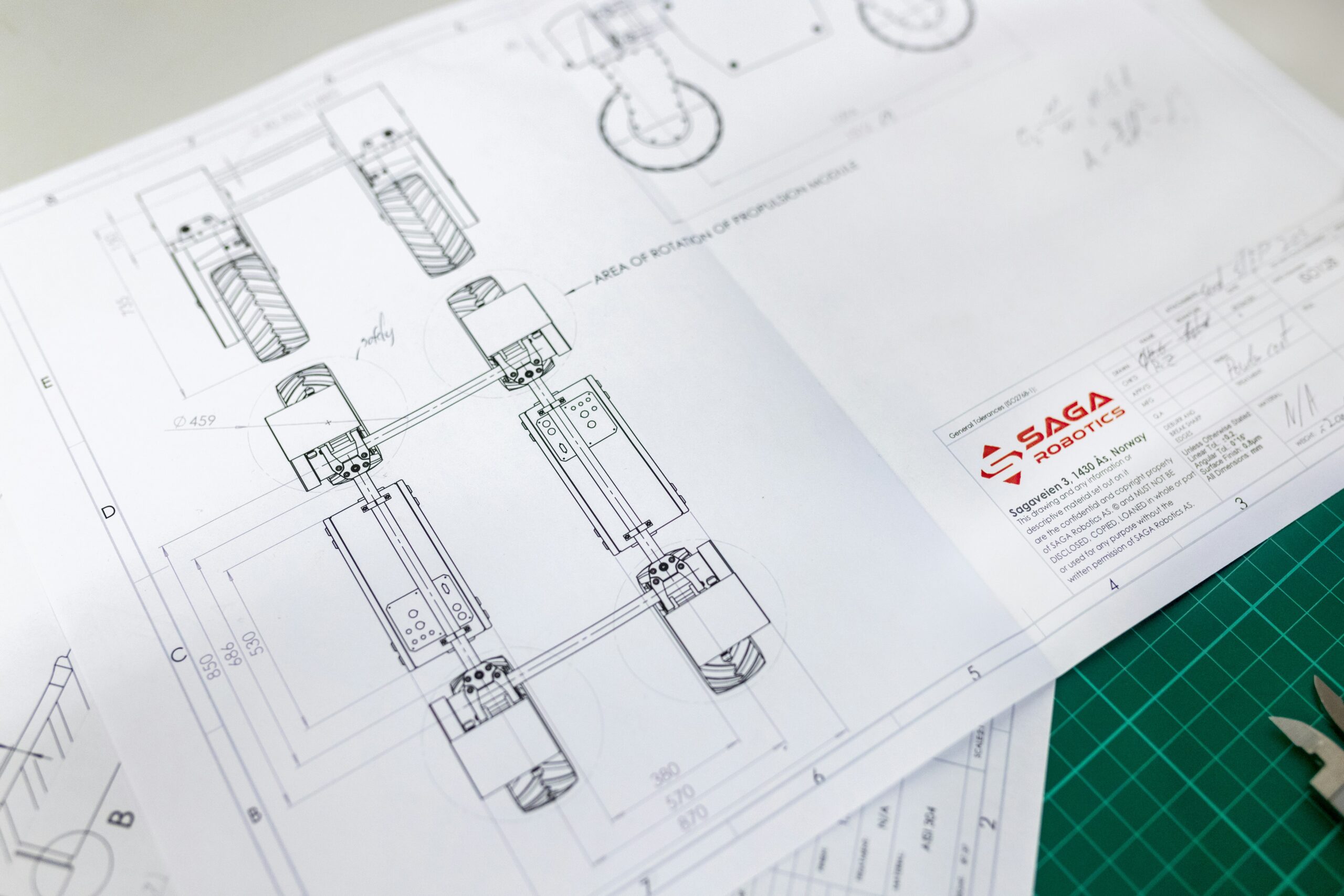

Last month, an engineer at a client firm pasted an entire set of client specifications into a free AI chatbot to speed up a technical summary. No malicious intent. No awareness of the exposure. The client’s proprietary design data was processed on a consumer platform with no enterprise data agreement, no retention controls, and no audit trail.

That is not a training failure. That is a governance gap. And it is happening at most engineering organizations right now, whether leadership knows it or not.

A 2025 Microsoft-commissioned study conducted by Censuswide found that 71% of UK employees have used unapproved consumer AI tools at work, with 51% doing so every week (Source: Microsoft/Censuswide, October 2025). Only 32% of those employees expressed concern about the privacy of company or customer data they entered into those tools.

Engineering organizations do not struggle with AI integration because the technology is weak. They fail because adoption happens outside the operating system of the business: no data classification, no access controls, no audit trail, no review protocol, no owner.

When that happens, the enterprise risk is predictable: sensitive project information flows into unapproved tools, outputs enter deliverables without documentation, and IT has no way to verify what happened after the fact.

The failure mode

AI adoption fails in two common ways:

Hard block with no sanctioned path: People still need speed, so they find workarounds. IT loses visibility and control. The Microsoft/Censuswide data supports this: 28% of employees using shadow AI reported that their company does not provide an approved alternative, leaving them no choice but to seek unauthorized tools.

Tool access with no operating model: Usage spreads fast, quality varies, and governance is bolted on after a near-miss.

In both cases, AI becomes a risk amplifier instead of an operational advantage.

What is breaking in real companies

What breaks is not “security” in the abstract. What breaks is operational control:

- Engineers do not have a simple way to know what data is safe to use with an LLM

- Approved tools are not mapped to data sensitivity or job role

- There is no consistent human review standard for AI-assisted work

- There is no audit trail that would survive a client question, regulator inquiry, or dispute

Engineering teams are then forced to choose between speed and compliance, which is the wrong tradeoff.

The misconception

Leaders often treat AI as either:

- A consumer chat tool that must be blocked, or

- A magic productivity layer that will “just help” once licenses are purchased

Both assumptions ignore the reality of engineering delivery: controlled documents, liability, traceability, and review chains.

The reality

What works is boring and repeatable:

- Pick an enterprise-grade LLM pathway (often Microsoft 365 Copilot or an enterprise version of ChatGPT, Claude, etc.)

- Define data classes and allowed usage

- Embed AI into specific workflows with checkpoints

- Require human review and documentation

- Log activity at the level your industry and clients require

- Pilot in one bottleneck workflow, then scale

This is how you turn AI into an operational capability instead of unmanaged experimentation.

The risk

Engineering organizations must manage four risk surfaces:

1) Data risk

LLM inputs can include client specs, drawings, contract language, bid strategy, internal pricing, and proprietary methods. The risk is not theoretical. The risk is uncontrolled data movement and unclear retention.

2) Governance risk

Without policy tied to enforcement, “guidance” becomes optional. If you cannot monitor usage and enforce boundaries, you do not have governance.

3) Cost and sprawl risk

Uncontrolled tool sprawl creates duplicated spend, inconsistent outputs, and vendor risk that procurement cannot assess.

4) Professional liability risk

If AI-assisted content enters calculations, design decisions, or client deliverables without documentation and review, you create defensibility problems. Engineering judgment remains human, and your operating model must prove it.

The operational model: how to embed AI into engineering workflows

Treat AI like any engineered system: define requirements, constrain interfaces, verify outputs.

Step 1: Establish a simple data classification for LLM use

Use four classes that engineers can apply quickly:

- Public: Safe for broad AI use

- Internal: Routine business content, allowed in approved enterprise tools

- Confidential: Client data, proprietary methods, competitive info, restricted to private tenant controls

- Restricted: Regulated or client-prohibited data, no external processing unless explicitly approved

Step 2: Map approved tools to data class

Do not approve “AI tools.” Approve data paths.

Microsoft 365 Copilot and enterprise LLMs inside your tenant typically fit Public and Internal use, and in many configurations, Confidential as well. Here is why: according to Microsoft’s own documentation, all prompts, data retrieval, and generated responses remain within the Microsoft 365 service boundary. Your tenant is logically isolated from other organizations through Microsoft Entra authorization and role-based access control. Data is encrypted at rest and in transit. Copilot inherits your existing SharePoint permissions, sensitivity labels, and compliance policies, and your data is not used to train foundation models. (Source: Microsoft Learn, “Data, Privacy, and Security for Microsoft 365 Copilot”)

If your organization already runs on Microsoft 365 with SharePoint, you are already operating within a tenant-isolated, enterprise-grade cloud environment with encryption, access controls, and data residency commitments. This is not technically “private cloud” in the infrastructure sense, but the security architecture provides logical isolation, audit capability, and data governance controls that satisfy most Confidential-class requirements when properly configured with Microsoft Purview sensitivity labels, Restricted SharePoint Search, and appropriate access policies.

For Restricted data, specifically information that is contractually prohibited from any external cloud processing, M365 Copilot may or may not be sufficient depending on your specific client agreements, regulatory requirements, and how your tenant is configured. Some organizations will need on-premises or private cloud deployments for their most sensitive workloads. The right answer comes from reading your contracts and compliance obligations, not from a blanket policy.
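To make "approve data paths, not tools" concrete, here is a minimal Python sketch of a lookup that ties each approved path to the most sensitive data class it may carry. The path names and their ceilings are hypothetical placeholders, not recommendations; the real values have to come from your contracts and tenant configuration. The sketch also assumes that unlisted tools may only ever see Public data.

```python
from enum import IntEnum


class DataClass(IntEnum):
    """The four classes from Step 1, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical data paths and ceilings, for illustration only.
# Each value is the MOST sensitive class that path is approved to carry.
APPROVED_PATHS = {
    "m365_copilot_tenant": DataClass.CONFIDENTIAL,
    "enterprise_saas_llm": DataClass.INTERNAL,
    "on_prem_model": DataClass.RESTRICTED,
}


def is_path_allowed(path: str, data_class: DataClass) -> bool:
    """Return True if the data class is at or below the path's ceiling.

    Assumption: any path that is not on the approved list is treated
    as suitable for Public data only.
    """
    ceiling = APPROVED_PATHS.get(path, DataClass.PUBLIC)
    return data_class <= ceiling
```

An engineer, or a pre-submission check in a workflow tool, asks one question: is this data class allowed on this path? Everything else is configuration owned by IT and engineering leadership.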

Step 3: Define role-based access and usage boundaries

- Who can use Copilot or enterprise LLMs

- For which workflows

- With which data classes

- With what review and documentation requirements

Provisioning and revocation must follow your existing identity and access management practices.
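The four questions above can be written down as explicit rules rather than left as guidance. The Python sketch below is one possible shape, assuming a simple rule table; the role names, workflow names, and rule values are hypothetical, and in practice the role would come from your identity provider rather than a string argument.

```python
from dataclasses import dataclass

# Data classes from Step 1, ordered from least to most sensitive.
SENSITIVITY = ["Public", "Internal", "Confidential", "Restricted"]


@dataclass(frozen=True)
class UsageRule:
    role: str             # who can use the tool
    workflow: str         # for which workflow
    max_data_class: str   # with which data classes (ceiling)
    review_required: bool  # with what review requirement


# Hypothetical rules, for illustration only.
RULES = [
    UsageRule("project_engineer", "technical_summary", "Confidential", True),
    UsageRule("marketing", "blog_draft", "Internal", False),
]


def check_usage(role: str, workflow: str, data_class: str) -> tuple[bool, bool]:
    """Return (allowed, review_required) for a requested use.

    A request with no matching rule is denied.
    """
    for rule in RULES:
        if rule.role == role and rule.workflow == workflow:
            allowed = (
                SENSITIVITY.index(data_class)
                <= SENSITIVITY.index(rule.max_data_class)
            )
            return allowed, rule.review_required and allowed
    return False, False
```

Denying any role and workflow combination that has no rule is a design choice in this sketch: it keeps new use cases from appearing without an owner approving them first.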

Step 4: Require human review protocols for engineering-impacting outputs

Define review rules that match your practice:

- Technical accuracy review, not just “does it read well”

- Reviewer competency appropriate to the deliverable

- Documented approval chain for anything client-facing or safety-relevant

Step 5: Audit trail and records that match your industry

At minimum, maintain:

- Approved tool list and configuration

- Usage logging appropriate to your risk profile

- Retention rules

- Workflow-level documentation showing where AI assisted and who verified

This is what makes AI defensible.
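As an illustration of workflow-level documentation, the Python sketch below builds one record per AI-assisted deliverable and serializes it as a JSON line. The field names are assumptions chosen for this example, not a standard schema, and where the line is stored (and for how long) depends on your own retention rules.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass
class AIAssistRecord:
    deliverable_id: str   # hypothetical internal document ID
    workflow: str         # where AI assisted
    tool: str             # which approved tool was used
    data_class: str       # classification of the inputs
    author: str           # who used the tool
    reviewer: str         # who verified the output
    review_outcome: str   # e.g. "approved" or "rejected"
    recorded_at: str      # UTC timestamp, ISO 8601


def make_record(deliverable_id, workflow, tool, data_class,
                author, reviewer, review_outcome) -> AIAssistRecord:
    """Build a record; refuse to create one without a named reviewer."""
    if not reviewer:
        raise ValueError("a named reviewer is required")
    return AIAssistRecord(
        deliverable_id, workflow, tool, data_class,
        author, reviewer, review_outcome,
        recorded_at=datetime.now(timezone.utc).isoformat(),
    )


def to_log_line(record: AIAssistRecord) -> str:
    """Serialize the record as one JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)
```

A record like this answers the questions a client or regulator is likely to ask: which tool, on what class of data, and who signed off.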

Step 6: Run a narrow pilot in a real bottleneck workflow

Pick one workflow where delay, rework, or coordination is costing you margin or schedule. Define:

- Inputs and allowed data classes

- Process steps and checkpoints

- Outputs and acceptance criteria

- KPIs tied to operational performance (cycle time, rework rate, turnaround time, delivery quality)

Then scale only after controls and KPIs hold.
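The "scale only after controls and KPIs hold" rule can be expressed as a simple gate. This Python sketch assumes every KPI is a "lower is better" measure with a non-zero baseline and that you have agreed a minimum fractional improvement for each; the KPI names and thresholds in the usage example are hypothetical.

```python
def ready_to_scale(baseline: dict, pilot: dict,
                   min_improvement: dict, controls_ok: bool) -> bool:
    """Return True only if controls held AND every KPI met its target.

    baseline / pilot: KPI name -> measured value (lower is better,
        baseline values must be non-zero).
    min_improvement: KPI name -> required fractional reduction
        (0.2 means at least 20% lower than baseline).
    controls_ok: result of your own governance check on the pilot.
    """
    if not controls_ok:
        return False
    for name, target in min_improvement.items():
        improvement = (baseline[name] - pilot[name]) / baseline[name]
        if improvement < target:
            return False
    return True


# Usage with hypothetical numbers:
baseline = {"cycle_time_hours": 10.0, "rework_rate": 0.20}
pilot = {"cycle_time_hours": 7.0, "rework_rate": 0.15}
targets = {"cycle_time_hours": 0.2, "rework_rate": 0.1}
decision = ready_to_scale(baseline, pilot, targets, controls_ok=True)
```

Agreeing the targets before the pilot starts matters more than the arithmetic: it stops the success criteria from drifting once results come in.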

What this looks like in practice

One client recently engaged Obnovit for a Half-Day AI Jumpstart training session with their newly formed AI Council, followed by a 3-week Roadmap Ramp engagement. During the Roadmap phase, one of the most significant workstreams was reviewing their existing AI governance policy, identifying gaps in data classification, access control, and audit trail coverage, and delivering recommended upgrades to bring the policy in line with their actual risk profile.

The work did not stop at the document. We trained their AI Council on the inherent risks and deployment options for enterprise LLMs, then worked alongside their Council and IT team to guide LLM selection based on their specific privacy, security, and governance requirements. From there, we helped map internal documentation and tool ecosystems to their governance policy, aligning data flows with the classification framework outlined in this article.

The result: their AI adoption moved from informal, unmanaged experimentation to a governed, auditable capability with leadership alignment, IT support, and a clear path to scale.

This is the difference between “we bought Copilot licenses” and “we have an operating model for AI.”

Deployment choices: match architecture to sensitivity

Three deployment patterns are common. The right choice depends on your client contracts, regulatory obligations, and data sensitivity, not preference.

| Deployment Pattern | Best For | Data Classes | Key Tradeoffs |
|---|---|---|---|
| On-premises or local models | Strict data residency, air-gapped environments, highly regulated workloads | Restricted, Confidential | Full control, higher infrastructure cost, limited model capability, internal maintenance burden |
| Private cloud or tenant-isolated deployment (e.g., Azure OpenAI in dedicated tenant, M365 Copilot with Purview controls) | Strong control with cloud scalability, most engineering firm use cases | Confidential, Internal | Enterprise-grade isolation, data residency compliance, requires proper configuration and governance |
| Standard enterprise SaaS (e.g., ChatGPT Team/Enterprise, Claude Team/Enterprise) | Lower sensitivity workflows where classification and enforcement are mature | Internal, Public | Fastest to deploy, lowest infrastructure cost, less granular control, verify data retention policies |

Key decision factors: Review your client contracts for data processing restrictions. Check your industry compliance requirements. Assess your IT team’s capacity to configure and maintain controls. Start with the deployment pattern that covers your highest-volume workflows while meeting your governance requirements, then layer in additional patterns for edge cases.

The Obnovit layer: what fixes this in practice

Obnovit helps engineering-driven organizations turn AI from confusion into measurable operational capacity by embedding AI into real workflows with governance, security, and discipline.

In practice, that means:

- Workflow selection based on real bottlenecks, not “AI everywhere”

- A governance model that engineering and IT can both sign off on

- Microsoft 365 Copilot and enterprise LLM enablement aligned to your tenant, data classes, and audit needs

- Human-in-the-loop review protocols that protect professional liability

- Pilot design with KPIs, then scale through a repeatable Crawl, Walk, Run, Sprint progression

The business outcome

When AI is implemented as a governed operational capability, engineering organizations typically see:

- Faster turnaround in documentation-heavy workflows

- Reduced rework from better first-pass drafts and structured review

- Improved delivery consistency through standardization

- Less tool sprawl and clearer vendor risk posture

- Higher engineering capacity applied to engineering judgment, not admin churn

The outcome is not “more AI.” The outcome is better operational performance with controlled risk.

What to do next

If you want a governance-safe path for Microsoft 365 Copilot and enterprise LLMs that engineering leaders and IT can both support, build a narrow pilot around one bottleneck workflow and put classification, access control, review, and audit trail in place before scale.

Ready to move from unmanaged AI experimentation to a governed operating model? Book a 30-minute strategy call and we will identify the one bottleneck workflow where a governed AI pilot would create the fastest ROI for your firm. You will also walk away with two high-impact use cases you can act on immediately.