The AI shift is already here for engineering power teams, and it is mostly invisible until you feel it in throughput.

Not because anyone is asleep at the wheel. Because the change does not announce itself. It shows up as a quiet reduction in friction across dozens of small, screen-based tasks.

This is not a job story.

This is a systems story.

When the underlying capability of the tools crosses a threshold, the best teams stop arguing about novelty and start redesigning how work flows through the organization. That is where we are now.

What changed

For a long time, AI was useful, but brittle.

You could get a decent draft, a rough summary, or a starting point. Then a human had to wrestle it into shape, correct mistakes, hunt for sources, and reassemble the output into something you would actually ship.

That meant AI was a helper, not a force multiplier.

The shift over the last year is that the best models are now strong enough to complete multi-step knowledge work with far fewer hand-holds. They can sustain context longer, follow constraints more reliably, and produce outputs that look like a first pass from a solid teammate.

Not a genius. Not infallible.

But usable.

That changes the engineering economics of time.

If the first draft of a real deliverable goes from hours to minutes, the bottleneck moves. Reviewing, validating, and deciding become the constraint, not producing the initial artifact.

Engineering leaders should treat that as a design change to the system, not a new app to trial.

Why engineering power teams feel this first

Power teams have a few traits in common.

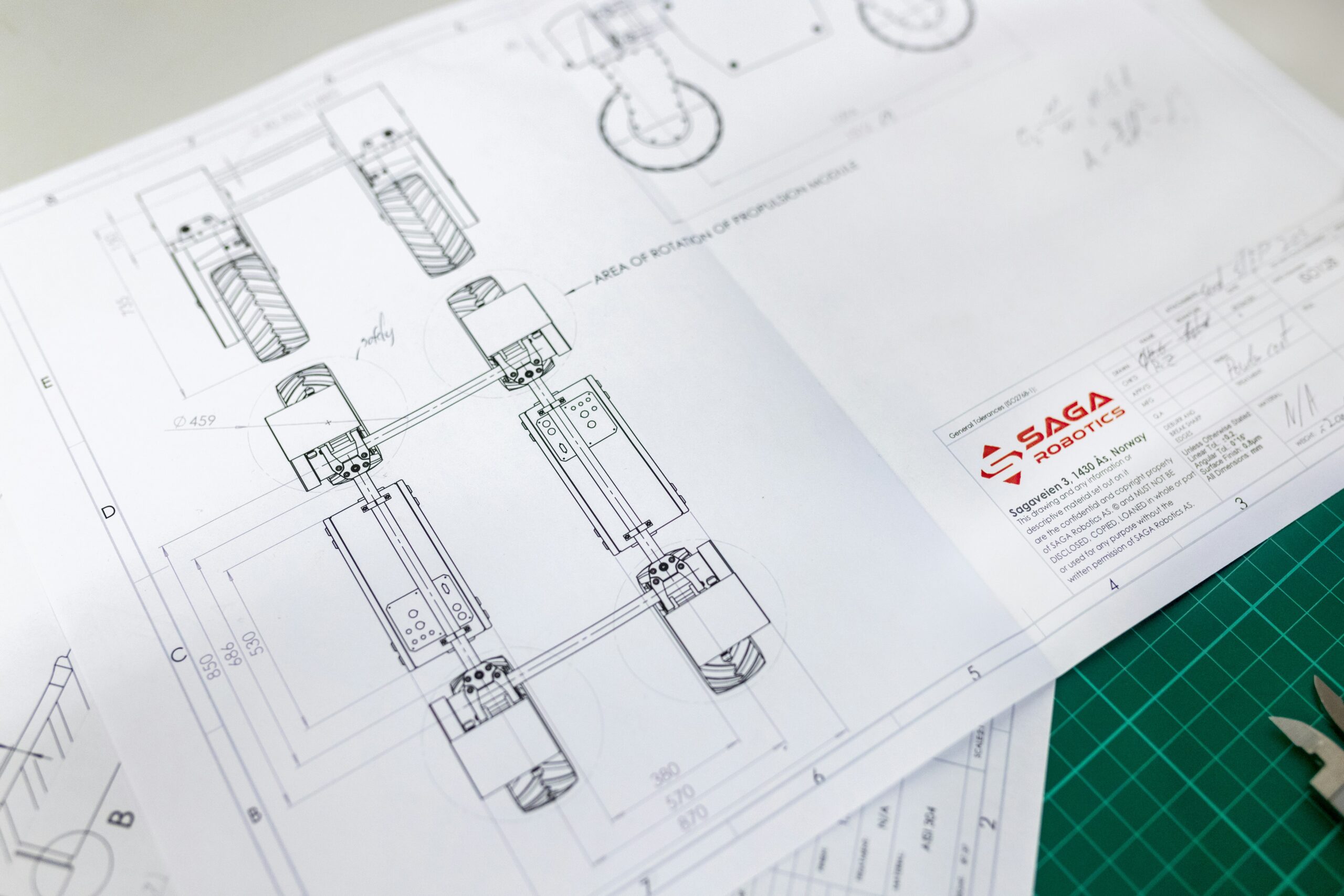

They run on repeatable artifacts. They communicate through written constraints. They live inside documentation, standards, drawings, submittals, calculations, specs, schedules, and change logs.

They also operate in environments where errors carry cost, risk, and reputation.

That combination is important.

AI is strongest where the work is:

- Mostly on a screen

- Constraint-heavy

- Repeatable

- Reviewable

- Backed by reference material

Engineering delivery fits that profile. So do the supporting functions around it, like project controls, quality, procurement coordination, estimating, and technical sales support.

The opportunity is not “let AI do engineering.”

The opportunity is “remove low-leverage drafting and synthesis work so engineers spend more time on judgment, risk, and decisions.”

The real leverage is not prompts, it is workflow

Most teams start with prompts because that is the obvious interface.

Then they get inconsistent results and conclude the tech is not ready.

That is like buying a new instrument and blaming it for a noisy signal because you never calibrated the loop.

Engineering power teams win when they move from prompting to commissioning.

Commissioning means you define the duty, define acceptance criteria, verify the source inputs, install checks, and only then scale.

If you want a mental model, treat AI like a junior engineer with extreme speed and broad knowledge, plus a known failure mode: it can confidently produce plausible nonsense when it is missing context.

Your job is to remove the missing context and install QA gates.
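
One way to make the commissioning idea concrete is to record each workflow as a small spec and refuse to scale until every step is filled in. The sketch below is illustrative only: `WorkflowSpec`, `commissioning_gaps`, and `ready_to_scale` are hypothetical names, and the sample values are placeholders, not a recommended standard.

```python
from dataclasses import dataclass, field


@dataclass
class WorkflowSpec:
    """Hypothetical commissioning record for one AI-assisted workflow."""
    duty: str = ""  # what the workflow is for
    acceptance_criteria: list[str] = field(default_factory=list)
    approved_sources: list[str] = field(default_factory=list)
    qa_checks: list[str] = field(default_factory=list)


def commissioning_gaps(spec: WorkflowSpec) -> list[str]:
    """Return the commissioning steps that are still open."""
    gaps = []
    if not spec.duty.strip():
        gaps.append("define the duty")
    if not spec.acceptance_criteria:
        gaps.append("define acceptance criteria")
    if not spec.approved_sources:
        gaps.append("verify the source inputs")
    if not spec.qa_checks:
        gaps.append("install checks")
    return gaps


def ready_to_scale(spec: WorkflowSpec) -> bool:
    """Scale only when no commissioning step is open."""
    return not commissioning_gaps(spec)


# Placeholder example: an RFI drafting workflow with no QA checks yet.
rfi_spec = WorkflowSpec(
    duty="Draft first-pass RFI responses for discipline-lead review",
    acceptance_criteria=["Uses the project response template"],
    approved_sources=["specs/", "meeting-minutes/"],
)
print(commissioning_gaps(rfi_spec))  # ['install checks']
print(ready_to_scale(rfi_spec))      # False
```

The point of the design is that "only then scale" becomes a check you can run, not a judgment made under schedule pressure.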

What AI can take off your plate right now

This is the part most people underestimate because they picture AI as a chatbot.

In practice, the biggest wins are not “ask it a question.” The wins are “push a real artifact through a controlled pipeline.”

Here are high-frequency examples where engineering teams are already seeing cycle-time compression when they set the workflow up correctly:

- Turning meeting notes into action registers with owners, due dates, and open items

- Drafting RFIs and reviewing inbound RFIs for scope, contradictions, and missing information

- Summarizing vendor submittals against spec requirements, including redlines and exception logs

- Producing first-pass method statements, ITPs, and QA checklists aligned to your templates

- Creating basis-of-design drafts from scattered inputs, then mapping assumptions and gaps

- Building technical comparison tables for alternates and equivalents, with traceability back to sources

- Generating project controls narratives, progress summaries, and stakeholder updates from structured data

- Creating onboarding briefs for new engineers joining midstream, including “what matters” context

Notice the pattern.

These are not creative writing tasks. They are synthesis and translation tasks, turning messy inputs into structured outputs.

That is exactly where the friction lives in most engineering organizations.

A before and after that will feel familiar

Consider a common scenario: a PM or discipline lead needs to respond to an RFI or an owner question that touches several documents.

Before:

- Search email threads, specs, meeting minutes, and prior RFIs

- Pull excerpts into a draft response

- Rewrite for clarity and tone

- Ask a senior reviewer to sanity check

- Iterate because the first draft missed key constraints

- Send, then handle follow-on questions because the response did not preempt the next issue

This can burn half a day, sometimes more, and it scales poorly.

After, with a commissioned workflow:

- Feed the model the approved source set for that project, not the whole internet, not a vague memory

- Constrain the output to your response format, including required fields, assumptions, and references

- Require citations back to the source documents for every claim

- Route the draft through a review gate, like you would a calc package or a submittal

- Log the response and its source links so the next similar question is faster
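
The citation requirement in the steps above can be checked mechanically before a human reviewer ever opens the draft. This is a minimal sketch, assuming the draft has already been split into claims with attached source IDs; `Claim`, `review_flags`, and every document ID shown are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    text: str
    citations: tuple[str, ...] = ()  # IDs of the source documents cited


def review_flags(claims, approved_sources):
    """Return (claim text, reason) pairs that a reviewer must resolve."""
    flags = []
    for claim in claims:
        if not claim.citations:
            flags.append((claim.text, "no citation"))
            continue
        unapproved = [c for c in claim.citations if c not in approved_sources]
        if unapproved:
            flags.append((claim.text, "unapproved source: " + ", ".join(unapproved)))
    return flags


# Hypothetical approved source set and draft response for one project.
approved = {"SPEC-A", "RFI-014", "MINUTES-03"}
draft = [
    Claim("Conduit fill limits follow the project spec.", ("SPEC-A",)),
    Claim("The owner accepted the alternate routing.", ()),
    Claim("The vendor confirmed lead time.", ("EMAIL-THREAD-7",)),
]
for text, reason in review_flags(draft, approved):
    print(f"{reason}: {text}")
```

A check like this does not judge whether a citation actually supports the claim. It only guarantees that the reviewer's time goes to claims that are traceable to the approved source set.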

Now the human time shifts from hunting and drafting to reviewing and deciding.

This does not reduce accountability. It increases it, because your response becomes more traceable and consistent.

That is what engineering power teams should optimize for.

The four failure modes to expect, and design around

If you want to stay calm and operator-like about AI, you need to treat its failures as engineering constraints.

Here are the common failure modes, plus the control that mitigates each one.

1) Missing context

Failure: plausible output that is wrong because it did not have the right inputs.

Control: lock the source of truth and constrain the model to that source set.

2) Unclear acceptance criteria

Failure: output that is not usable because “good” was never defined.

Control: define “good” in writing, use templates, use examples.

3) No verification step

Failure: errors slip through because speed creates false confidence.

Control: add a QA gate with explicit checks, require citations, use checklists.

4) Scaling before stability

Failure: the team tries to automate ten workflows at once, then abandons it.

Control: one bottleneck at a time, prove the metric, then expand.

None of these are exotic.

They are the same categories of failure you already manage in project delivery: inputs, specs, QA, and controlled rollout.

Crawl, Walk, Run, Sprint for engineering power teams

Most AI initiatives stall because the team starts at Sprint with no instrumentation.

A practical progression looks like this.

Crawl: One task, one team, one metric

Pick a weekly bottleneck that is mostly screen-based and has a clear done definition.

Examples: RFI drafts, submittal exception logs, meeting action registers, or progress narratives.

Define one metric that matters:

- Cycle time

- Rework rate

- Review time

- Throughput per engineer

- Response consistency

Then run the task through a simple, repeatable process for two to four weeks.
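
Tracking the one metric does not need tooling beyond a spreadsheet or a few lines of code. The sketch below compares median cycle time before and during a pilot. The function name `cycle_time_change` is hypothetical, and the numbers are made up for illustration; they are not measured results.

```python
from statistics import median


def cycle_time_change(baseline_hours, pilot_hours):
    """Compare median cycle time before and during the pilot.

    Returns (baseline median, pilot median, fractional change).
    A negative change means the pilot was faster.
    """
    base = median(baseline_hours)
    pilot = median(pilot_hours)
    return base, pilot, (pilot - base) / base


# Made-up numbers for illustration only, not measured results.
baseline = [4.0, 5.0, 3.0, 6.0]  # hours per RFI draft before the pilot
pilot = [2.0, 1.5, 2.5, 2.0]     # hours per RFI draft during the pilot

base, new, change = cycle_time_change(baseline, pilot)
print(f"median {base:.1f} h -> {new:.1f} h ({change:+.0%})")
```

The median is used here instead of the mean so that one unusually slow task does not dominate a two to four week sample. That is a design choice for the sketch, not a requirement.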

Walk: Standardize inputs and outputs

Once you see a repeatable win, standardize:

- Approved source folders

- Templates

- Output formats

- Review checklists

- Naming conventions

- Version control

This is where most of the leverage comes from. Standardization makes the tool reliable.

Run: Add guardrails and governance

Now you install the controls that keep quality stable when volume rises:

- Role-based access to source repositories

- Required citations for claims

- Redline workflows

- Sign-off rules

- Audit trails for sensitive deliverables

- A clear policy for what can and cannot be run through the system

This is not bureaucracy. It is how power teams protect quality while increasing velocity.

Sprint: Expand to adjacent workflows

Only after the first workflow is stable do you expand.

A common Sprint sequence for engineering teams is:

- Meeting notes to action register

- RFI drafting and review

- Submittal compliance and exception logging

- Technical comparisons for alternates

- Progress reporting and stakeholder comms

- Onboarding briefs and knowledge transfer packs

By Sprint, you are not using AI. You are redesigning delivery.

Where humans stay essential

Being a calm operator means saying this clearly.

Engineering is not just typing.

Engineering is judgment under constraints.

AI can accelerate drafting, synthesis, and first-pass analysis. It can help surface contradictions, missing information, and edge cases. It can help you explore options faster.

But humans remain responsible for:

- Risk decisions

- Scope interpretation

- Safety and compliance accountability

- Tradeoffs across cost, schedule, and constructability

- Relationship-driven alignment with clients and stakeholders

- The final sign-off that carries liability

If your team confuses fast output with a correct decision, you will lose trust.

If your team uses AI to get to better decisions faster, you will gain trust.

That is the line.

A simple readiness check for engineering leaders

If you want a clear read without the hype, use this check to diagnose whether your team is well positioned.

- Identify the bottleneck

Name the task that steals the most expert time each week.

- Define “good”

Write acceptance criteria in plain language. Include examples of good and bad.

- Control inputs

Decide what documents are allowed. Organize them. Version them. Make them accessible.

- Install a QA gate

Create a checklist. Require citations. Make review explicit and fast.

- Track a metric

If the metric does not move, change the workflow, not the tool.

This is the difference between teams that dabble and teams that compound gains.

What to tell your team

If you lead a power team, your message should not be fear, and it should not be hype.

It should be this:

We are going to use these tools like engineers.

We will test, measure, standardize, and scale only when quality is stable.

We are not replacing judgment. We are removing friction so judgment is applied where it matters.

That stance keeps people grounded and keeps you moving.

The calm operator conclusion

AI is now strong enough to change engineering throughput when it is integrated into a controlled workflow.

You do not need to bet the company. You do not need to overhaul everything.

You need one bottleneck, one metric, and a commissioning mindset.

The teams that treat this like a production tool, with standards and QA, will quietly pull away.

The ones that treat it like a novelty will stay busy, not effective.